What started as a Plex server has slowly grown into a full home infrastructure setup. I also use it as a testing ground for work - we run Proxmox internally, so having my own cluster to break helps me break theirs less often.

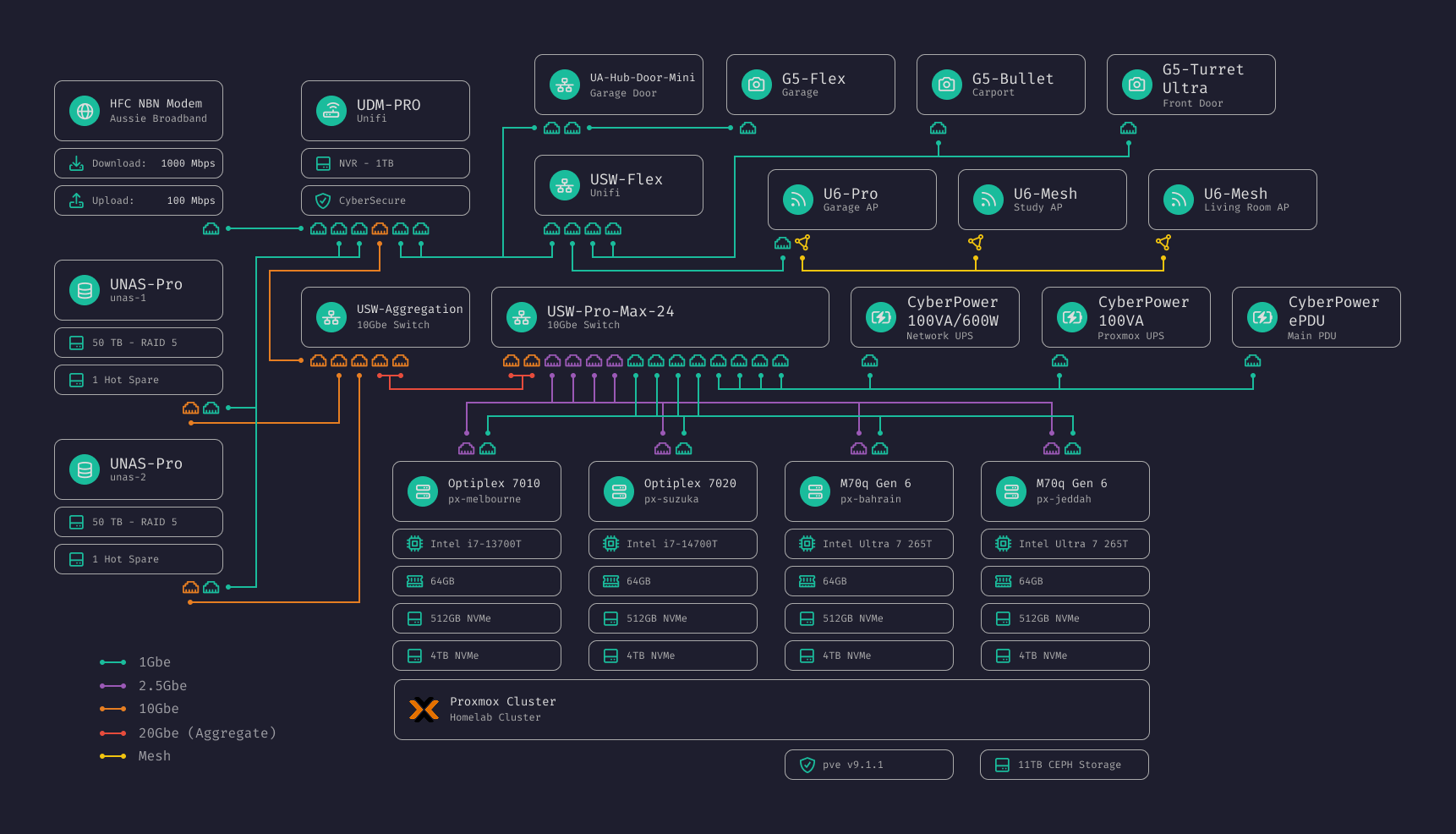

Network Diagram

Hardware

Networking

| Device | Role |
|---|---|
| UDM-Pro | Router, firewall, NVR |
| USW-Aggregation | 10GbE backbone |
| USW-Pro-Max-24 | Main switch, Proxmox connectivity |
| USW-Flex | Camera switch |
| USW-Lite-8-PoE (x2) | Study and living room |
| U6-Pro | Primary AP |
| U6-Mesh (x2) | Mesh APs for study and living room |
| UniFi Door Hub Mini | Garage door control |

Compute (Homelab Proxmox Cluster)

Three micro PCs form a Proxmox cluster with ZFS storage across 12TB of NVMe. This cluster handles all home services, but I also use it for work testing when I need more resources or want to test HA configurations.

| Node | Hardware | CPU | RAM | Storage | NIC |
|---|---|---|---|---|---|
| pve-amber | Lenovo M70q Gen 6 | Ultra 7 265T (20t) | 64GB | 512GB + 4TB NVMe | 1GbE + 2.5GbE |
| pve-lager | Lenovo M70q Gen 6 | Ultra 7 265T (20t) | 64GB | 512GB + 4TB NVMe | 1GbE + 2.5GbE |
| pve-porter | Lenovo M70q Gen 6 | Ultra 7 265T (20t) | 64GB | 512GB + 4TB NVMe | 1GbE + 2.5GbE |

Each node has the built-in 1GbE NIC plus a 2.5GbE NIC added in place of the WiFi card. The 512GB boot drives hold ISOs and CT templates; the 4TB drives back the ZFS storage.

These sit in my server rack in 2x 1U mounts that hold 2 PCs each. I’m contemplating building a custom power supply to power the 3 nodes and fit it in the spare holder in the mounts. This should clean up some cabling and probably reduce power usage.
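
As a quick sanity check on the figures in the table, the raw cluster totals can be added up in a few lines of Python. This is only a sketch: the `Node` class and its field names are made up for illustration, and the totals are raw hardware numbers, not usable capacity after any ZFS redundancy or replication.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    """One Proxmox node, using the figures from the hardware table."""
    name: str
    threads: int
    ram_gb: int
    data_tb: int  # the 4TB NVMe drive; the 512GB boot drive is left out


NODES = [
    Node("pve-amber", threads=20, ram_gb=64, data_tb=4),
    Node("pve-lager", threads=20, ram_gb=64, data_tb=4),
    Node("pve-porter", threads=20, ram_gb=64, data_tb=4),
]

total_threads = sum(n.threads for n in NODES)
total_ram_gb = sum(n.ram_gb for n in NODES)
total_data_tb = sum(n.data_tb for n in NODES)

print(f"{len(NODES)} nodes: {total_threads} threads, "
      f"{total_ram_gb}GB RAM, {total_data_tb}TB NVMe")
```

The 12TB result matches the NVMe figure quoted for the cluster as a whole.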

Storage

| Device | Config | Capacity | Purpose |
|---|---|---|---|
| UNAS-Pro (x2) | 7x 10TB, RAID 5 + hot spare | ~50TB each | Media, backups |
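
The ~50TB figure lines up with simple RAID 5 arithmetic if one of the seven drives is the hot spare, which is my reading of the table and an assumption here. A rough sketch (the function name is made up, and filesystem overhead is ignored):

```python
def raid5_usable_tb(total_drives: int, drive_tb: int, hot_spares: int = 0) -> int:
    """Usable capacity of one RAID 5 group, before filesystem overhead."""
    active = total_drives - hot_spares
    if active < 3:
        raise ValueError("RAID 5 needs at least three active drives")
    # One drive's worth of capacity goes to parity.
    return (active - 1) * drive_tb


# Assumption: the hot spare is one of the seven drives in each unit.
per_unit_tb = raid5_usable_tb(total_drives=7, drive_tb=10, hot_spares=1)
print(per_unit_tb)      # 50
print(per_unit_tb * 2)  # 100 across both units
```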

Power

2x CyberPower OR1000ERM1U (1000VA/600W) protecting the core infrastructure.

Network

Internal services run on *.home.lachlancox.dev, resolved by the UDM-Pro’s internal DNS. Public services are exposed through a reverse proxy on my main domain.

VLANs

| VLAN | Name | Subnet | Purpose |
|---|---|---|---|
| 1 | Management | 192.168.1.0/24 | Infrastructure hardware |
| 2 | Internal Users | 10.10.20.0/24 | WiFi clients |
| 20 | Infrastructure Services | 192.168.30.0/24 | Proxmox, infra services |
| 21 | Internal Services | 192.168.32.0/24 | Internal-only services |
| 22 | Public Services | 192.168.33.0/24 | Internet-exposed services |
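
When debugging firewall rules it helps to map an address back to its VLAN. A minimal sketch using Python's standard `ipaddress` module with the subnets from the table; the `vlan_for` helper and the sample addresses are hypothetical:

```python
import ipaddress

# VLAN ID -> (name, subnet), copied from the table above.
VLANS = {
    1: ("Management", "192.168.1.0/24"),
    2: ("Internal Users", "10.10.20.0/24"),
    20: ("Infrastructure Services", "192.168.30.0/24"),
    21: ("Internal Services", "192.168.32.0/24"),
    22: ("Public Services", "192.168.33.0/24"),
}


def vlan_for(address: str):
    """Return (vlan_id, name) for an IPv4 address, or None if no subnet matches."""
    ip = ipaddress.ip_address(address)
    for vlan_id, (name, subnet) in VLANS.items():
        if ip in ipaddress.ip_network(subnet):
            return vlan_id, name
    return None


# Sample addresses are made up for illustration.
print(vlan_for("192.168.33.10"))  # (22, 'Public Services')
print(vlan_for("192.168.31.5"))   # None
```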

Services

| Service | Type | VLAN | Description |
|---|---|---|---|
| infra-proxy | LXC | 20 | Caddy reverse proxy |
| infra-auth | LXC | 20 | Authelia for SSO |
| svc-plex | LXC | 22 | Plex media server |
| svc-headscale | LXC | 22 | Self-hosted Tailscale control server |
| svc-tandoor | LXC | 21 | Recipe management |
| svc-actual | LXC | 21 | Actual Budget |
| svc-media | VM | 21 | The arr stack (Docker) |
| svc-uptime | LXC | 21 | Uptime Kuma |

Note: Metrics and visibility are basically non-existent right now. Planning to add Grafana for dashboards at some point.

Work (Separate Proxmox Cluster)

A dedicated single-node cluster strictly for work.

| Node | Hardware | CPU | RAM | Storage | NIC |
|---|---|---|---|---|---|
| pve-supermicro | Supermicro A+ Server E301-9D-8CN4 | AMD EPYC 3251 SoC (8c/16t) | 128GB | 128GB + 4TB NVMe | 1GbE mgmt + 1GbE |

The Supermicro normally comes in a 1.5U chassis, but I custom-fit it into a 1U for my rack.

Naming Convention

Proxmox nodes are named after beer styles: pve-{beer} (e.g., pve-amber, pve-lager, pve-porter).

Services follow a prefix convention:

- infra-{name}: Infrastructure services (VLAN 20)
- svc-{name}: Application services (VLAN 21/22)
- wrk-{name}: Work testing environments
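
The prefix convention is easy to check mechanically against the services table. A small sketch follows; the `check` helper is hypothetical, and since no VLAN is stated for wrk- environments, none is enforced for them:

```python
# Prefix -> VLANs allowed by the convention (None means "not specified").
ALLOWED_VLANS = {
    "infra": {20},
    "svc": {21, 22},
    "wrk": None,
}

# (name, VLAN) pairs copied from the services table.
SERVICES = [
    ("infra-proxy", 20), ("infra-auth", 20),
    ("svc-plex", 22), ("svc-headscale", 22),
    ("svc-tandoor", 21), ("svc-actual", 21),
    ("svc-media", 21), ("svc-uptime", 21),
]


def check(name: str, vlan: int):
    """Return a problem description, or None if the name fits the convention."""
    prefix, sep, rest = name.partition("-")
    if not sep or not rest or prefix not in ALLOWED_VLANS:
        return f"{name}: unknown prefix"
    allowed = ALLOWED_VLANS[prefix]
    if allowed is not None and vlan not in allowed:
        return f"{name}: VLAN {vlan} not allowed for {prefix}-"
    return None


problems = [p for p in (check(n, v) for n, v in SERVICES) if p]
print(problems)  # [] when every service follows the convention
```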

Notes:

- Planning to adopt svc- for internal (VLAN 21) and dmz- for public (VLAN 22)
- Might add a second reverse proxy to separate internal and public traffic